Search engines trawl the online, sifting by way of billions of knowledge factors to serve up info in a fraction of a second. The entry to prompt info we’ve come to take as a right is predicated on an unlimited system of knowledge retrieval and software.

Google has been probably the most forthcoming about how its search engine works, so I’ll use it for instance.

At the only degree, search engines like google do two issues.

- Index info. Discover and retailer details about 30 trillion particular person pages on the World Wide Web.

- Return outcomes. Through a classy collection of algorithms and machine studying, determine and show to the searcher the pages most related to her search question.

Crawling and Indexation

How did Google discover 30 million net pages? Over the previous 18 years, Google has been crawling the online, web page by web page. A software program referred to as a crawler — also referred to as a robotic, bot, or spider — begins with an preliminary set of net pages. To get the crawler began, a human enters a seed set of pages, giving the crawler content material and hyperlinks to index and comply with. Google’s crawling software is known as Googlebot, Bing’s is known as Bingbot, and Yahoo makes use of Slurp.

When a bot encounters a web page, it captures the knowledge on that web page, together with the textual content material, the HTML code that renders the web page, details about how the web page is linked to, and the pages to which it hyperlinks.

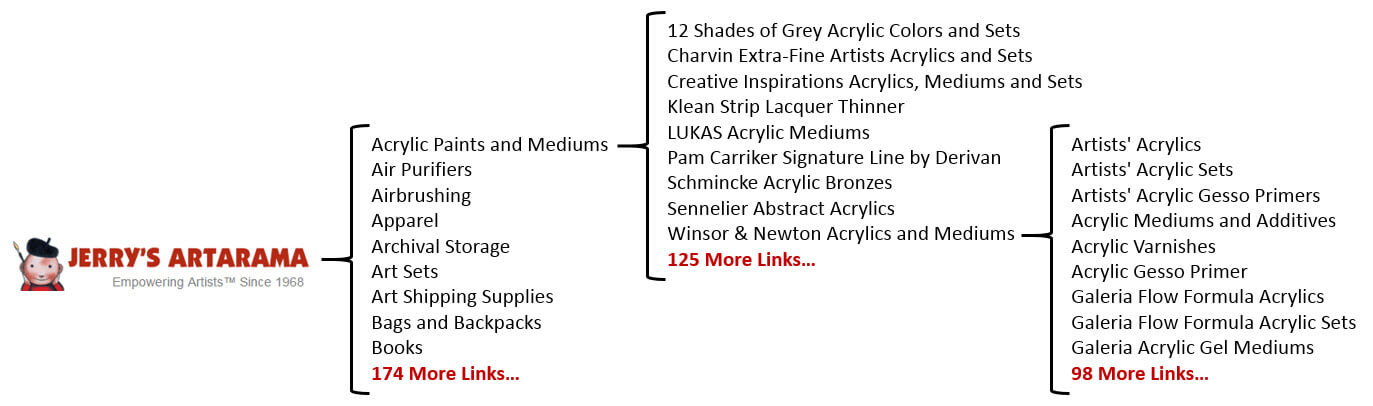

As Googlebot crawls, it discovers increasingly more hyperlinks. The picture under exhibits a really simplistic diagram of a single, three-web page crawl path on Jerry’s Artarama, a reduction artwork provides ecommerce website.

An instance of a easy crawl path on Jerrysartarama.com.

The emblem at left signifies a place to begin on the website’s home page, the place Googlebot encounters 184 hyperlinks: the ten listed and 174 extra. When Googlebot follows the “Acrylic Paints and Mediums” hyperlink within the header navigation, it discovers one other web page. The “Acrylic Paints and Mediums” web page has one hundred thirty five hyperlinks on it. When Googlebot follows the hyperlink to a different web page, reminiscent of “Winsor & Newton Acrylics and Mediums,” it encounters 108 hyperlinks. The instance ends there, however crawlers proceed accessing pages by way of the hyperlinks on every web page they uncover till all the pages thought-about related have been found.

In the method of crawling a website, bots will encounter the identical hyperlinks repeatedly. For instance, the hyperlinks within the header and footer navigation ought to be on each web page. Instead of recrawling the content material in the identical go to, Googlebot may notice the connection between the 2 pages based mostly on that hyperlink and transfer on to the subsequent distinctive web page.

All of the knowledge gathered in the course of the crawl — for 30 trillion net pages — is saved in monumental databases in monumental knowledge facilities. To get an concept of the size of simply one among its 15 knowledge facilities, watch Google’s official tour video “Inside a Google knowledge middle.”

As bots crawl to find info, the knowledge is saved in an index inside the info facilities. The index organizes info and tells a search engine’s algorithms the place to seek out the related info when returning search outcomes.

But an index isn’t like a darkish closet that all the things will get stuffed into randomly because it’s crawled. Indexation is tidy, with found net web page info saved together with different related info, akin to whether or not the content material is new or an up to date model, the context of the content material, the linking construction inside that specific website and the remainder of the online, synonyms for phrases inside the textual content, when the web page was revealed, and whether or not it incorporates footage or video.

Returning Search Results

Results are displayed after you seek for one thing in a search engine. Every net web page displayed is known as a search end result, and the order during which the search outcomes are displayed is called rating.

But as soon as info is crawled and listed, how does Google determine what to point out in search outcomes? The reply, in fact, is a intently guarded secret.

How a search engine decides what to show is loosely known as its algorithm. Every search engine makes use of proprietary algorithms that it has designed to tug probably the most related info from its indices as shortly as potential in an effort to show it in a fashion that its human searchers will discover most helpful.

For occasion, Google Search Quality Senior Strategist Andrey Lipattsev just lately confirmed that Google’s prime three search rating elements are content material, hyperlinks, and RankBrain, a machine studying synthetic intelligence system. Regardless of what every search engine calls its algorithm, the essential features of recent search engine algorithms are comparable.

Content determines contextual relevance. The phrases on a web page, mixed with the context during which they’re used and to pages they’re linked to, determines how the content material is saved within the index and which search queries it’d reply.

Links decide authority and relevance. In addition to offering a pathway for crawling and discovering new content material, hyperlinks additionally act as authority alerts. Authority is decided by measuring alerts associated to the relevance and high quality of the pages linking into every particular person web page, in addition to the relevance and high quality of the pages to which that web page hyperlinks.

Search engine algorithms mix tons of of alerts with machine studying to find out the match between every web page’s context and authority and the searcher’s question to serve up a web page of search outcomes. A web page must be among the many prime seven to 10 most-extremely-matched pages algorithmically, in each contextual relevance and authority, to be displayed on the primary web page of search outcomes.